Real World Object Recognition – Just Like Terminator

When we think about the future of computer vision, we think about the ability of artificial intelligence (AI) to recognize objects in the real world, in real time, much the same way humans do. Every time we start talking about real-world object recognition, the listener will almost always say “you mean like the one in Terminator”. Yes, exactly like the one in Terminator! AI algorithms should be able to identify objects as you pan over them with a hand-held scanner (or through augmented reality glasses). The scanner then reads out loud what it sees as you point it at things.

Real-World Object Recognition

Think about how useful that would be to a blind person with headphones who navigates by listening to the audio output. That scanner may soon be your smartphone. While this may be useful for blind people, it’s also useful for those of us who want to identify things that we can’t exactly type into a search engine. Forgetting about the logistics of sticking your smartphone in some wild animal’s face, here are some things that we’d like our smartphone to identify:

- Wild animals (it happens)

- Plants or flowers you come across when hiking

- Mushrooms you may want to throw in your bordelaise

- Birds for bird watchers

- Fish at the fishmonger’s

- Weird looking vegetables in the Asian market

- Weird looking Asian characters (especially for expats)

- Weird looking Asians

Don’t laugh at the last one. Computer vision is actually being used to build facial profiles based on the way people look. There’s an Israeli company called Faception which uses profiling to determine if you’re a terrorist or a pedophile based solely on the way you look. No joke.

Apparently, they have already signed a $750,000 contract with the Department of Homeland Security. This may be one of the most controversial things we’ve ever read about, so naturally, you’re going to see another article from us on this topic soon. Anyways, back to our list of requirements.

Outside of people that live in or near Chinatown, the other items on our list seem to best suit some mushroom-picking, bird-watching hipster who loves to cook with locally sourced ingredients and lives in the mountains. That description actually fits some of our writers (sans ridiculous beards and skinny jeans) so we thought we’d take a closer look at this space to see if any apps exist for our shopping list.

There are a few things we need to consider when we’re talking about building real-world object recognition or, as we like to call it, “the Terminator app”. Objects that are relatively still can be photographed, and the photo can then be analyzed by the image recognition technology already on offer from companies like Cloudsight, Clarifai or Blippar. All we need to do is take their technology and optimize it so that we can identify more obscure objects. Let’s take a look at what apps are already out there for the examples we mentioned earlier.

- Plants – There’s an app for this called “Leafsnap”. Take any leaf and put it on a white background. The app will then identify the species. Turns out there are quite a few apps that can do this for trees, plants, and flowers.

- Birds – There’s an app for this called Merlin which can identify 650 different North American birds.

- Shrooms – We were all excited when we saw a link titled “The best Android apps for mushroom hunting” until we saw that none of these apps could actually identify mushrooms in the real world. What good is that? There must be a huge market for such an app so you can have that startup idea for free. Then let us know so we can add the link (INSERT COOL MUSHROOM APP LINK HERE).

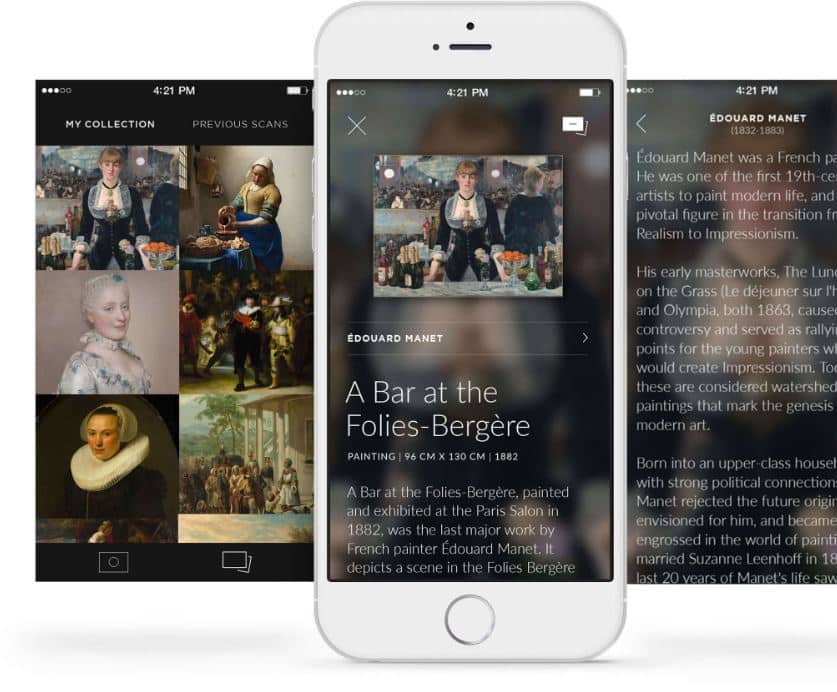

- Art – There is a very cool app for this already called Smartify. Look:

- Art on Shrooms – If you’re on mushrooms in an art museum and totally confused, the Smartify app can really come in handy.

- Dead Fish – Again, we got all excited and clicked the “Best fish identification apps for iOS (Top 100)” link. Is it too much to ask that one of these apps identifies dead fish in real time so we can use it at the fish market? If you have developed such an app and not properly marketed it, send us the link so we can help you with that.

- Vegetables – We couldn’t find an app specifically for vegetables, but did find the question raised in a Clarifai forum. Apparently, Clarifai is working on a “food model” for their image recognition platform.

These apps are cool and all, but what about identifying objects as they are being seen? We want that same object recognition in “real-time”, and for moving objects too.

What you’ll see in this video is the type of “Terminator app” that we’re looking for. The technology on display in that demo comes from a machine learning startup called TeraDeep which uses a “Field Programmable Gate Array” or FPGA instead of the Nvidia GPUs we’ve talked about before. This promises faster analytics at half the power, ideal for devices that need to conserve power – like smartphones for example.

UPDATE: 06/23/2018 – TeraDeep website no longer exists.

Ideally, you want your scanner to identify objects in a matter of milliseconds and then make observations about complex situations, like “don’t eat that mushroom because it will kill you”. The ability of an application to identify objects that are moving around in the real world is pretty much the same technology you would need to do this in video. As it turns out, at a conference in San Francisco last week, Google unveiled a new machine learning offering called Cloud Video Intelligence. From the article by Digital Trends:

Developers can now create applications capable of detecting objects within video and making them searchable and discoverable. Both nouns and verbs can be applied to those objects, such as “dog” and “run.”
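To make the “searchable” idea a bit more concrete, here is a minimal Python sketch of what an app might do once it has labels for a video. It does not call Google’s service. The `detections` list, its (timestamp, label, confidence) layout, and the `build_index` and `search` helpers are all our own assumptions for illustration, with made-up numbers.

```python
from collections import defaultdict

# Hypothetical output of a video labeling service (made-up data):
# (timestamp in seconds, label, confidence between 0 and 1).
detections = [
    (0.0, "dog", 0.94),
    (0.5, "dog", 0.91),
    (0.5, "run", 0.80),
    (1.0, "dog", 0.42),  # low confidence, dropped by the filter below
    (1.0, "run", 0.85),
    (4.0, "dog", 0.90),
]


def build_index(detections, min_confidence=0.5, max_gap=1.0):
    """Map each label to a list of (start, end) segments where it appears.

    Detections of the same label that are at most `max_gap` seconds
    apart are merged into one segment.
    """
    index = defaultdict(list)
    for timestamp, label, confidence in sorted(detections):
        if confidence < min_confidence:
            continue
        segments = index[label]
        if segments and timestamp - segments[-1][1] <= max_gap:
            # Extend the most recent segment for this label.
            segments[-1] = (segments[-1][0], timestamp)
        else:
            segments.append((timestamp, timestamp))
    return dict(index)


def search(index, *labels):
    """Return the time ranges in which every requested label is present."""
    if not labels:
        return []
    result = index.get(labels[0], [])
    for label in labels[1:]:
        overlaps = []
        for a_start, a_end in result:
            for b_start, b_end in index.get(label, []):
                start, end = max(a_start, b_start), min(a_end, b_end)
                if start <= end:
                    overlaps.append((start, end))
        result = overlaps
    return result


index = build_index(detections)
print(search(index, "dog", "run"))  # where a noun and a verb co-occur
```

Searching for a noun and a verb together, like “dog” and “run”, then comes down to finding where their time segments overlap. A real service would return richer data than this, so treat the thresholds and the merging rule as placeholders.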

Conclusion

Now here’s the real litmus test for any powerful computer vision object recognition platform like the one Google is building. Can it do everything we just described above in a single app, on a single device, in real time? Because if such an app ever comes out, it will be a must-have tool for any human.

In the future, whatever hardware device ends up being chosen (glasses probably) will describe anything you’re looking at and overlay it with useful information (yes, just like Ah-nold in Terminator). That way, when you’re out taking a stroll in the woods, you can learn all about the flora and fauna which cover this amazing planet that we hopefully don’t end up destroying.
