An Artificial Intelligence Definition for Beginners

All-natural and organic are familiar terms to consumers, and anything artificial has become anathema to many. Artificial intelligence – or AI – is the exception: investors should be hungry to learn as much as possible about a technology that is becoming as ubiquitous as organic tofu.

The vast majority of nearly 2,000 experts polled by the Pew Research Center in 2014 said they anticipate robotics and artificial intelligence will permeate wide segments of daily life by 2025. A 2015 study covering 17 countries found that artificial intelligence and related technologies added an estimated 0.4 percentage point on average to those countries’ annual GDP growth between 1993 and 2007, accounting for just over one-tenth of those countries’ overall GDP growth during that time.

Interesting numbers – but just what is artificial intelligence? And are robots with AI going to enslave humanity?

We can’t answer the second question, but here’s a good working artificial intelligence definition from a recent U.S. government report called “Preparing for the Future of Artificial Intelligence”:

“Artificial intelligence is a computerized system that exhibits behavior that is commonly thought of as requiring intelligence.”

Or more technically speaking, AI is a “system capable of rationally solving complex problems or taking appropriate actions to achieve its goals in whatever real-world circumstances it encounters.” In a way, artificial intelligence is about understanding – then recreating – the human mind. And AI is not just about designing computers that mimic how we think, learn and process information, but also how we perceive and feel about the world around us.

Understanding the world of AI only begins with a simple artificial intelligence definition. There’s a whole universe of terminology we need to explore in order to understand the domain before we can invest in it.

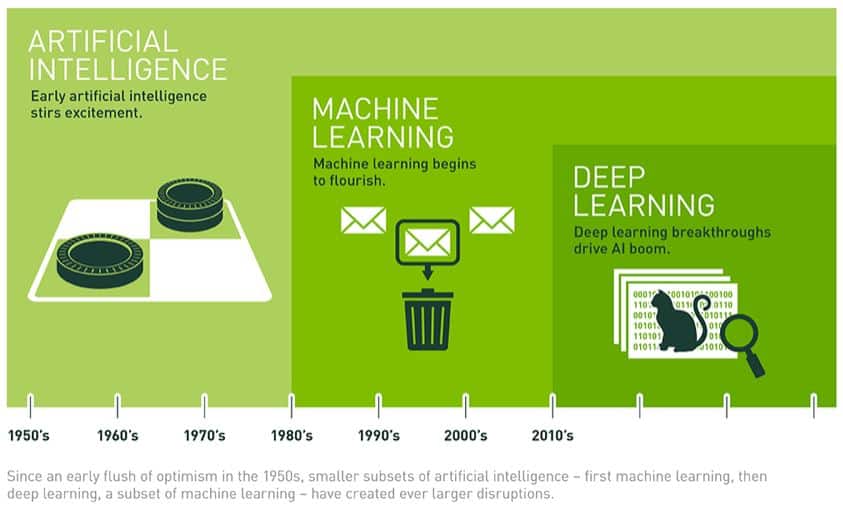

Machine learning

Machine learning is about how computers with artificial intelligence can improve over time, using different algorithms (sets of rules or processes), as they are fed more data. AI machines learn by recognizing trends in data that allow them to make decisions. For example, designing autonomous vehicles involves building machines that learn to navigate. A system may use pattern recognition algorithms from which it learns, for instance, to distinguish pedestrians from vehicles from animals, so that it knows to hit the brakes when it sees a cat or a zebra, even if it has never encountered the latter, because it has learned to identify animals accurately. Regular readers will recall a previous article we wrote on this topic titled “Deep Learning And Machine Learning Simply Explained” which gives an example of how this works in practice.
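To make the idea of learning from data a little more concrete, here is a minimal Python sketch of one very simple pattern recognition approach, a nearest-centroid classifier. It is nowhere near what a real autonomous vehicle uses. The two features (height in metres and speed in metres per second), the sample numbers, and the function names `train` and `predict` are all invented for illustration.

```python
# Toy nearest-centroid classifier: it "learns" one average point per label.
# All features and numbers below are made up for illustration only.

def train(examples):
    """Average the feature vectors seen for each label."""
    sums, counts = {}, {}
    for features, label in examples:
        total = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            total[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in total]
            for label, total in sums.items()}


def predict(centroids, features):
    """Return the label whose learned average is closest to the observation."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# (height in metres, speed in m/s) paired with a label -- hypothetical data
training_data = [
    ((1.7, 1.4), "pedestrian"),
    ((1.8, 1.2), "pedestrian"),
    ((1.5, 15.0), "vehicle"),
    ((1.6, 20.0), "vehicle"),
    ((0.3, 3.0), "animal"),
    ((0.5, 4.0), "animal"),
]

model = train(training_data)

# An observation the model never saw during training
print(predict(model, (1.4, 5.0)))
```

The last observation is taller and faster than any animal in the training data, yet it lands closest to the learned "animal" average, which is the sense in which a system can react to something it has never encountered before. Feeding in more examples shifts the averages, which is how this toy model "improves" with more data.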

Neural networks

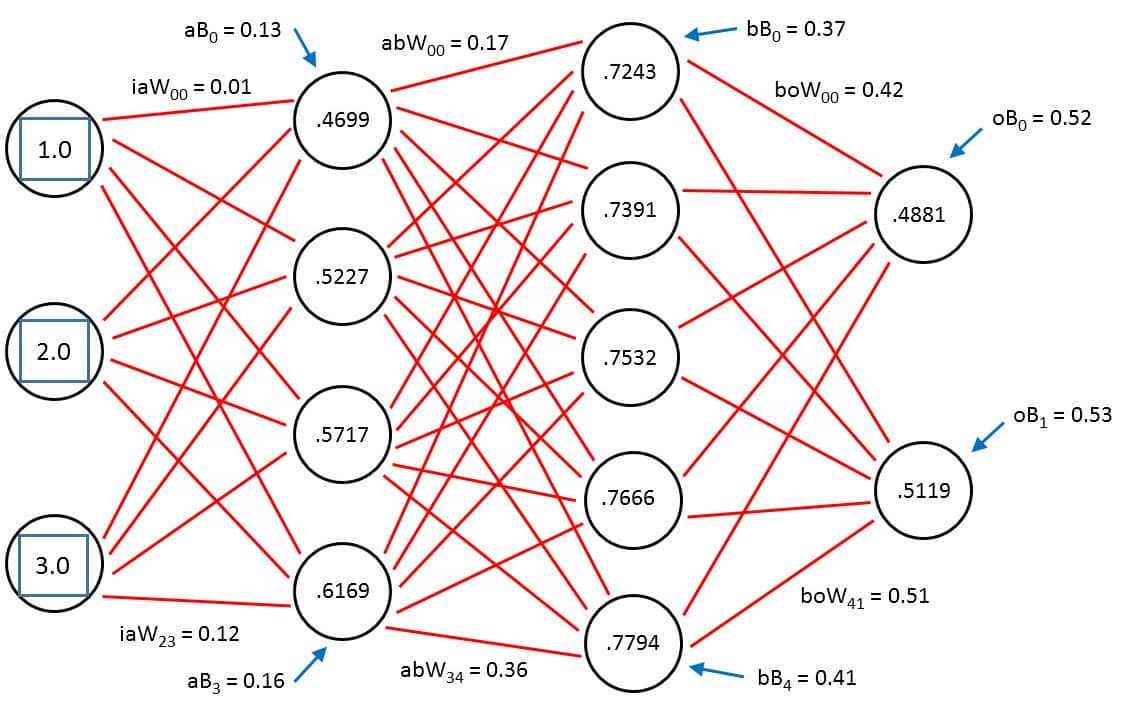

A type of machine learning, neural networks are superficially based on how the brain works. There are different kinds of neural networks – feedforward and recurrent are a couple of terms that you may encounter – but basically, they consist of a set of nodes (or neurons) arranged in multiple layers with weighted interconnections between them. Each neuron combines a set of input values to produce an output value, which in turn is passed on to other neurons downstream as seen in the example below:

To take an example from the U.S. government report: in an image recognition application, a first layer of units might combine the raw data of the image to recognize simple patterns in the image; a second layer of units might combine the results of the first layer to recognize patterns-of-patterns; a third layer might combine the results of the second layer; and so on. We train neural networks by feeding them lots of delicious big data to learn from.
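The layered flow described above can be sketched in a few lines of Python. This is only an illustration of a feedforward pass, assuming a sigmoid activation and hand-picked weights. A real network would learn its weights from data, and the names `neuron`, `layer` and `feedforward` are our own.

```python
import math


def neuron(inputs, weights, bias):
    """Combine weighted inputs into a single output between 0 and 1."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))  # sigmoid activation (an assumption)


def layer(inputs, weight_rows, biases):
    """Run every neuron in a layer on the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]


def feedforward(inputs, layers):
    """Pass each layer's outputs downstream as the next layer's inputs."""
    activations = inputs
    for weight_rows, biases in layers:
        activations = layer(activations, weight_rows, biases)
    return activations


# Hypothetical, hand-picked weights: 2 inputs -> 3 hidden neurons -> 1 output
network = [
    ([[0.5, -0.6], [0.1, 0.8], [-0.3, 0.2]], [0.0, 0.1, -0.1]),
    ([[1.0, -1.0, 0.5]], [0.0]),
]

output = feedforward([1.0, 0.0], network)
print(output)
```

Training, which this sketch leaves out, is the process of nudging those weights and biases until the outputs match known examples.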

Deep learning

Deep learning is simply machine learning with a larger, deeper neural network. Such networks typically use many layers – sometimes more than 100 – and often use a large number of units at each layer, to enable the recognition of extremely complex, precise patterns in data. The diagram below shows how deep learning fits into the bigger picture:

Some successful applications of deep learning are computer vision and speech recognition (which is closely tied to natural language processing).
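To get a feel for what "many layers" and "a large number of units" mean in practice, the following rough Python sketch counts the adjustable numbers (weights and biases) in a fully connected network. The layer sizes are invented for the comparison, and real deep learning networks often use other layer types that this simple count does not cover.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists the number of units per layer, input first.
    """
    total = 0
    for inputs, outputs in zip(layer_sizes, layer_sizes[1:]):
        total += inputs * outputs  # one weight per connection
        total += outputs           # one bias per receiving unit
    return total


# Hypothetical sizes: a small network versus a 100-hidden-layer one
shallow = [784, 32, 10]
deep = [784] + [512] * 100 + [10]

print(count_parameters(shallow))
print(count_parameters(deep))
```

With these made-up sizes the deep network has roughly a thousand times more values to tune than the shallow one, which helps explain why deep learning goes hand in hand with lots of data and fast hardware.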

Computer vision

Computer vision might sound like the latest 3D eyewear, but it’s actually a field of research for designing machines with the ability to process, understand and use visual data just as humans do. The eyes, in this case, usually consist of a camera. Autonomous vehicles are one obvious example. Another one that may be sitting in your living room with a teenager right now is a device called Kinect for Xbox. Kinect uses a sophisticated system for motion-capture that allows a user to interact with the computer without the use of a controller.

Natural language processing

Natural language processing, as defined by aitopics.org, “enables communication between people and computers and automatic translation to enable people to interact easily with others around the world.” Those who belong to the Apple cult are familiar with one version of this artificial intelligence – Siri. Output and input can be verbal or written. Other terms that fall into this category include natural language understanding and natural language generation.

Affective computing

For some, it’s not enough to imbue computers with artificial intelligence. An emerging field called affective computing works to give our electronics emotional intelligence, whether to understand a human user or to influence one emotionally. The best way to learn more about this exciting application of artificial intelligence is to read our article on “Affective Computing and AI Emotion Recognition” which talks about an interesting startup in this space called Affectiva.

GPUs

Many neural networks for artificial intelligence are powered by what are called graphics processing units (GPUs). Popularized by a company called NVIDIA, which opened the chips up to general-purpose computing with the 2007 release of its CUDA platform, GPUs basically help computers work much faster on highly parallel tasks than those operating with a central processing unit (CPU) alone. Some companies have built their own specialized chips. For example, Google being Google, the technology giant has a chip it calls the Tensor Processing Unit, or TPU, that supports the software engine (TensorFlow) that drives its deep learning services, according to Wired magazine. There are also a handful of startups working on building AI chips.

Cognitive computing

Cognitive computing is one of those terms that have fairly recently entered the AI lexicon. Some attribute the term to IBM and the birth of Watson, the computer Jeopardy champ. One definition that has spread widely around the inter-webs sums it up thus: “Cognitive computing involves self-learning systems that use data mining (i.e., big data), pattern recognition (i.e., machine learning) and natural language processing to mimic the way the human brain works.” Katherine Noyes writes in Computerworld that cognitive computing “deals with symbolic and conceptual information rather than just pure data or sensor streams, with the aim of making high-level decisions in complex situations.”

Conclusion

That all sounds a lot like artificial intelligence, eh? According to a blog post by technology market analysis company VDC Research, the difference between artificial intelligence and cognitive computing boils down to the idea that the former tells the user what course of action to take based on its analysis while the latter provides information to help the user decide. Or perhaps, as Noyes implies in her conclusion, cognitive computing might just be clever repackaging of artificial intelligence. After all, even the best AI can’t outsmart old-fashioned human marketing.

“Those who belong to the Apple cult…”

Seriously? A person who buys a product “belongs to a cult”?

I never heard of Nanalyze before, but I now know not to waste any time on it.

Thank you for the comment J E.

It’s a bit of a light hearted jab at Apple users and not something people should take seriously.

Which reminds me of another joke. You know how to tell someone is an Apple user?

Don’t worry, they’ll let you know 😉

Very well explained! A brief and nice post! AI is growing, no doubt about it. But do you really think this technology will achieve super intelligence in the future? If yes, when?

Thank you for your kind words. It depends on how you define “super intelligence” but that would certainly be far greater than human intelligence. There are all kinds of estimates as to when we’ll hit human intelligence but by most counts we’re progressing faster than we anticipated.

Well-written article indeed, and very well said: “even the best AI can’t outsmart old-fashioned human marketing.”

Thank you for that positive feedback! Jobs may go away but there will always be things only humans can do well.

Can a computer cook a five course meal?

Good point.

I hope my comments here will help those who are a little in the dark as to what AI actually means and just how AI compares to what we call Human Intelligence.

The key here to understanding things is in the name, ‘Artificial’ Intelligence, in that it is something that is artificial, compared to say Human Intelligence which I think we all can acknowledge is not artificial. So the key question here is what do we mean by Human Intelligence? Here I think we are talking about an Intelligence that is backed by consciousness.

Will Artificial Intelligence ever reach the level of Human Intelligence? No, because it does not have and never will have consciousness; real or true intelligence comes from the existence of a level of consciousness. When we speak of AI we are talking about an intelligence with no consciousness, so it is called Artificial for a reason. The conclusion is that AI is merely a trick and will forever remain so.

Now, will AI become useful to humanity? I think yes. Will it be something worth considering as an investment? Again, I think yes. However, please understand that AI is only going to be as good as its programming, because it is essentially like any other computer software in that it has to be programmed to do something or to process information in a certain way. In this case it is programmed intelligence.

And just for fun, will AI be a threat to humanity?… The answer I think is only if it is programmed to be so.

Will memristors play a role in artificial intelligence?

For our readers (and us too) – Memristors are basically a fourth class of electrical circuit element, joining the resistor, the capacitor, and the inductor, that exhibits its unique properties primarily at the nanoscale.

Looks like they haven’t really taken off ($3 million market?). Right now watch FPGAs and GPUs.

https://nanalyze.com/2017/05/xilinx-investing-fpgas-ai-hardware/

https://nanalyze.com/2017/05/investing-gpus-ai-amd-vs-nvidia/
