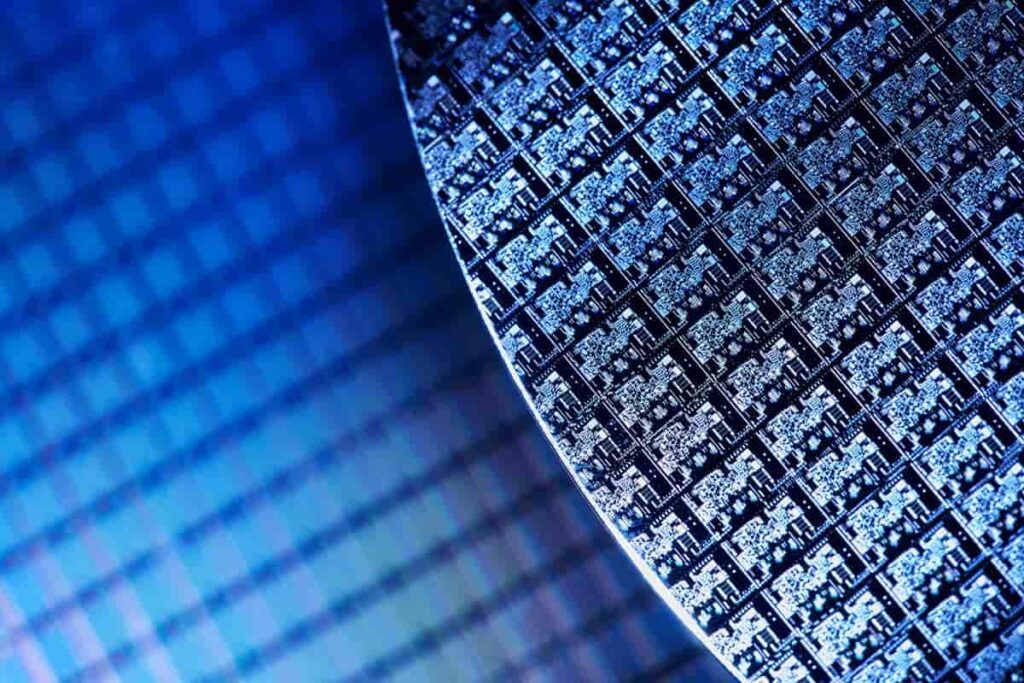

How AI Chips Are Changing the Semiconductor Industry


Our previous series on the global AI race highlighted how mastering artificial intelligence has become a geopolitical competition. Nearly all developed countries are now talking about how much they plan to focus on improving their AI capabilities. While others plan to plan, China and the U.S. seem to be vying for leadership, each country seeing its relative position through its own lens. When it comes to who controls the picks and shovels, one country has the upper hand.

“…the complex supply chains needed to produce leading-edge AI chips are concentrated in the United States and a small number of allied democracies…”

CSET Report – AI Chips: What They Are and Why They Matter

That’s just one of the interesting insights you’ll find in a report released this month by the Center for Security and Emerging Technology (CSET) titled “AI Chips: What They Are and Why They Matter.” Founded just last year, CSET is a privately funded think tank that provides U.S. policymakers with “nonpartisan analysis of the security impacts of emerging technologies.”

Since everyone working from home is too busy binging shoddy Netflix shows to read a 72-page report, we pulled out the key points that show how AI chips are quickly changing the entire semiconductor manufacturing landscape. We’ll briefly acknowledge the authors of the report who did all the heavy lifting – Saif M. Khan and Alexander Mann, thanks lads, you rock – and then proceed to use all their hard work to bring our readers up to speed on where AI chips are heading so we appear to be experts on the topic. Leveraging other people’s hard work is, after all, one of the things we MBAs do best.

Specialized AI Chips

For the purposes of this article, we will use the definition of AI as “cutting-edge computationally-intensive AI systems, such as deep neural networks.” That’s also the definition used by the CSET report, which goes on to say that “training a leading AI algorithm can require a month of computing time and cost $100 million.” Consequently, researchers are now turning to “specialized AI chips,” which are designed to accomplish specific tasks. It turns out that running the same AI application on general-purpose chips can cost tens to thousands of times more than running it on specialized ones. Now seems like a good time to talk about the various types of AI chips and what they’re used for.
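To put those multipliers in perspective, here's a back-of-the-envelope sketch in Python. It rests on our own simplifying assumption, not the report's model: cost scales linearly with a chip's efficiency gap. It treats the $100 million training run as having been done on specialized chips, and the 10x, 100x, and 1,000x gaps are illustrative points, not measurements.

```python
def general_purpose_cost(specialized_cost_usd: int, efficiency_gap: int) -> int:
    """Cost of the same job on chips `efficiency_gap` times less cost-efficient.

    Simplifying assumption (ours): cost scales linearly with the efficiency gap.
    """
    if efficiency_gap < 1:
        raise ValueError("efficiency_gap must be at least 1")
    return specialized_cost_usd * efficiency_gap


TRAINING_RUN_USD = 100_000_000  # the $100 million figure quoted from the CSET report

# Illustrative efficiency gaps, not measured values.
for gap in (10, 100, 1_000):
    cost = general_purpose_cost(TRAINING_RUN_USD, gap)
    print(f"{gap:>5,}x less efficient hardware: ${cost:,}")
```

At the 1,000x end of the range, a $100 million run would balloon to $100 billion, which helps explain why researchers are turning to specialized chips.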

AI Chips: Training vs. Inference

Like any other piece of software, AI algorithms take inputs – loads of delicious big data in this case – and use them to produce useful outputs. Garbage in, garbage out. That’s why having quality proprietary big data sets is a competitive advantage. That free app you downloaded to your smartphone is helping provide a clean stream of big data for someone’s AI algorithms to munch on.

If you give an AI algorithm 10,000 cat pictures with each cat clearly labeled, the algorithm can then learn to judge, with some degree of certainty, whether any new picture contains a cat. The process of showing the AI algorithm cat pictures is called “training.” When the algorithm switches from being trained to making predictions about whether a photo contains a cat, it moves from “training” to “inference.” To infer is to “deduce or conclude (information) from evidence and reasoning rather than from explicit statement.” That’s exactly what we train AI algorithms to do.
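The split between training and inference can be sketched with a toy "cat detector" in plain Python. This is a minimal sketch, not how production image models work: each picture is reduced to two made-up numeric features (hypothetical stand-ins for what a real network would extract from pixels), we use 100 synthetic labeled examples instead of 10,000, and the model is a simple logistic regression rather than a deep neural network. Training repeatedly nudges the weights using labeled examples; inference just applies the finished weights to a new picture and returns a probability.

```python
import math
import random


def sigmoid(z: float) -> float:
    """Squash a score into a probability in (0, 1) without overflowing."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train(examples, epochs: int = 200, lr: float = 0.1):
    """Training: repeatedly nudge the weights to reduce error on labeled examples."""
    w1, w2, b = 0.0, 0.0, 0.0
    for _ in range(epochs):
        for (x1, x2), label in examples:
            p = sigmoid(w1 * x1 + w2 * x2 + b)
            err = p - label  # gradient of the log-loss with respect to the score
            w1 -= lr * err * x1
            w2 -= lr * err * x2
            b -= lr * err
    return w1, w2, b


def infer(model, features) -> float:
    """Inference: apply the finished weights to a new, unlabeled picture."""
    w1, w2, b = model
    x1, x2 = features
    return sigmoid(w1 * x1 + w2 * x2 + b)  # probability the picture is a cat


# 100 synthetic labeled "pictures": cats cluster near (2, 2), non-cats near (-2, -2).
random.seed(0)
labeled = [((random.gauss(2, 0.5), random.gauss(2, 0.5)), 1) for _ in range(50)]
labeled += [((random.gauss(-2, 0.5), random.gauss(-2, 0.5)), 0) for _ in range(50)]

model = train(labeled)                      # training phase
print(f"{infer(model, (2.1, 1.8)):.3f}")    # inference: close to 1 (cat)
print(f"{infer(model, (-1.9, -2.2)):.3f}")  # inference: close to 0 (not a cat)
```

Note that training touches every labeled example hundreds of times, while inference is a single cheap calculation per picture, which is one reason the two workloads can favor different chips.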

Training an AI algorithm is an ongoing process, since algorithms get better the more they are used. When an edge case leaves an AI algorithm uncertain about its decision, a human in the loop can provide the answer, and the algorithm gets just a little bit better. In this way, AI algorithms keep improving over time.
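The human-in-the-loop idea can be sketched as a simple routing rule: confident predictions are accepted automatically, while edge cases go to a person whose answer is saved as new training data. Everything here is hypothetical: the function names, the 0.3 to 0.7 "uncertain" band, and the toy model and reviewer. Retraining on the collected examples is left out for brevity.

```python
from typing import Callable, List, Tuple

Features = Tuple[float, float]
Example = Tuple[Features, int]


def route_predictions(
    predict: Callable[[Features], float],
    ask_human: Callable[[Features], int],
    batch: List[Features],
    training_set: List[Example],
    low: float = 0.3,
    high: float = 0.7,
) -> List[int]:
    """Label a batch; send uncertain cases to a human and keep their answers."""
    labels = []
    for features in batch:
        p = predict(features)  # model's probability that the picture is a cat
        if low < p < high:
            # Edge case: the model is unsure, so a human provides the answer
            # and the labeled example is saved for the next training round.
            label = ask_human(features)
            training_set.append((features, label))
        else:
            # Confident prediction: accept the model's answer as-is.
            label = 1 if p >= high else 0
        labels.append(label)
    return labels


def toy_predict(features: Features) -> float:
    # Hypothetical model: confidence rises with the first feature, clipped to [0, 1].
    return min(max(0.5 + 0.2 * features[0], 0.0), 1.0)


def toy_human(features: Features) -> int:
    # Hypothetical reviewer whose "ground truth" is simply first feature > 0.
    return 1 if features[0] > 0 else 0


new_training_data: List[Example] = []
labels = route_predictions(toy_predict, toy_human,
                           [(3.0, 0.0), (0.5, 0.0), (-3.0, 0.0)],
                           new_training_data)
print(labels)             # [1, 1, 0]
print(new_training_data)  # [((0.5, 0.0), 1)]
```

Only the one uncertain picture reaches the human, and that single answer is what makes the next version of the model "just a little bit better."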

3 Main Types of AI Chips

Now that we understand the two main ways in which AI chips are used – training and inference – we can define three categories of chips and some major producers of each.

- Graphics Processing Unit (GPU) – (Nvidia and AMD) – used primarily for training AI algorithms

- Field Programmable Gate Array (FPGA) – (Intel and Xilinx) – used primarily for inference

- Application-Specific Integrated Circuit (ASIC) – (Google, Tesla, Cerebras, Intel) – used for both training and inference

Try not to get sidetracked by all the other nomenclature you’ll see used. Custom-built ASICs for AI often go by other names, such as tensor processing units (TPUs), neural processing units (NPUs), and intelligence processing units (IPUs). For the purposes of this article, we’ll stick with talking about the three main categories – GPUs, FPGAs, and ASICs.

GPUs and – to a lesser extent – FPGAs are widely commercialized because they are general-purpose chips. They can be used for a wide variety of applications. The downside to general-purpose chips is that they require a great deal of research and development for each incremental improvement. If you’re building a chip for many uses, you need to consider lots of variables during the design process.

On the other hand, ASICs are designed around a single algorithm (or set of algorithms) and are therefore easier to optimize. They’re also highly customized and, consequently, more expensive to produce since they are ordered in smaller batches. If better algorithms are developed, ASICs quickly become obsolete, while a GPU or FPGA might be repurposed. The CSET report includes a great table summarizing how these three types of chips compare to your bog-standard central processing unit (CPU), which serves as the baseline benchmark for efficiency and speed.

If you’re a startup spending millions to train and release an algorithm ahead of your competitors, speed and efficiency are paramount.

AI Chips of All Sizes

In a previous piece titled “Invest in Many AI Chips with One Stock,” we noted four main areas where AI chip growth will come from:

- Mobile – All premier smartphones will offer AI processing capabilities by 2021

- Data Center – Over half of all enterprises will use AI accelerators in their server infrastructure by 2022

- Auto – Autonomous vehicles will be produced in volume by 2020

- IoT – Over one-fifth of IoT devices will have AI capabilities by 2022

Each of these application areas will need AI chips of varying size and power. On the upper end you have expensive data center chips, and on the lower end you have less costly AI chips located at the edge, with smartphones accounting for over a billion AI chips by 2024, according to Deloitte.

Soon, specialized AI chips will be integrated into just about all electronics systems, and the market share they gain will come at the expense of non-AI chips.

Companies looking for a competitive advantage are now designing their own chips in-house. Both Google and Tesla have built ASICs that are highly customized to the AI algorithms they have developed. And it’s not just large companies. Listed alongside Google, Intel, and Tesla is a stealthy startup called Cerebras, which we first came across three years ago in our piece on 12 AI Hardware Startups Building New AI Chips. Cerebras has developed the biggest chip ever made: the Wafer Scale Engine, which powers the company’s CS-1 system and boasts 1.2 trillion transistors. For comparison, the biggest GPU available at the time contained just 21.1 billion transistors.

As for the world’s biggest chip maker, Intel (INTC), earlier this year the company said it generated $3.8 billion in AI revenue in 2019. To put that number in perspective, Intel’s total revenue for 2019 was $72 billion, so around 5.3% of it can be attributed to AI. The last earnings call was rife with mentions of AI, and the CEO talked about how Intel is “investing to lead with a strong portfolio of products,” such as its acquisition of Habana Labs, “a leading developer of programmable deep learning accelerators for the data center.” That’s because Intel doesn’t have much choice.

AI Chips and Moore’s Law

In the semiconductor industry, Moore’s Law is a rule of thumb that says the number of transistors in a chip should double every two years. In other words, the power of chips should grow exponentially, and while that was the case for a while, it’s not anymore.

Today, leading chips contain billions of transistors, but they have 15 times fewer transistors than they would have if Moore’s Law had continued.

CSET Report – AI Chips: What They Are and Why They Matter
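The doubling rule is easy to state as a formula: starting from N0 transistors, an idealized Moore’s Law gives N0 × 2^(t/2) after t years. The Python sketch below is our own illustration, assuming a strict two-year doubling that real chips never followed exactly. It also turns the report’s 15x shortfall into time: log2(15) is about 3.9 missed doublings, or roughly eight years of lost progress.

```python
import math

DOUBLING_PERIOD_YEARS = 2.0  # idealized Moore's Law: transistor count doubles every two years


def projected_transistors(start_count: float, years: float) -> float:
    """Transistor count after `years` if the count doubled every DOUBLING_PERIOD_YEARS."""
    return start_count * 2 ** (years / DOUBLING_PERIOD_YEARS)


def years_of_progress(factor: float) -> float:
    """Years of idealized Moore's Law needed to gain (or, here, miss) a given factor."""
    return DOUBLING_PERIOD_YEARS * math.log2(factor)


print(projected_transistors(1.0, 10))        # 32.0: five doublings in a decade
print(f"{years_of_progress(15):.1f} years")  # 7.8 years of missed doublings
```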

Moore’s Law has arguably made society what it is today, and it’s gradually fizzling out. Since chip makers can no longer count on ever-smaller transistors to improve their hardware, they’re now looking to improve the software.

AI is becoming so pervasive that AI chips are now displacing general-purpose chips. Chipmakers are building highly customized AI chips that use their own programming languages optimized for AI algorithms. Some chips have a single AI algorithm embedded inside for maximum speed. Others use fewer transistors to accomplish the same thing. The extent to which these AI chips are outperforming standard CPUs is remarkable.

An AI chip a thousand times as efficient as a CPU provides an improvement equivalent to 26 years of Moore’s Law-driven CPU improvements.

CSET Report – AI Chips: What They Are and Why They Matter
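The same logarithm converts a one-off efficiency jump into years of Moore’s Law. Under a textbook two-year doubling, a 1,000x gain works out to about 20 years. The report’s 26-year figure therefore implies an average doubling time of roughly 2.6 years, presumably because it is measured against actual CPU improvement rates, which have been slower. We don’t reproduce the report’s own calculation here; the sketch below is only a consistency check under those assumptions.

```python
import math


def equivalent_years(gain: float, doubling_period_years: float) -> float:
    """Years of steady doubling needed to match a one-off improvement of `gain`."""
    return doubling_period_years * math.log2(gain)


def implied_doubling_period(gain: float, years: float) -> float:
    """Doubling period at which a one-off `gain` equals `years` of progress."""
    return years / math.log2(gain)


print(f"{equivalent_years(1_000, 2.0):.1f} years")        # 19.9 years with textbook two-year doubling
print(f"{implied_doubling_period(1_000, 26):.1f} years")  # 2.6 years implied by the 26-year figure
```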

In other words, it’s simply impossible to deploy an enterprise-level AI algorithm – at least one that can create exponential improvements to drive exponential returns – without specialized AI chips. Then there’s the cost of computing power, the primary cost driver for AI labs that rent huge amounts of it from cloud providers. A more efficient chip gets the same work done with less energy and less compute time, and chip makers are finding that end users will pay a huge premium for specialized AI chips. The semiconductor industry now needs quicker development life cycles that incorporate client-specific customizations. Since one size doesn’t fit all, chipmakers can no longer enjoy the economies of scale that came from producing large quantities of a single chip.

Conclusion

With Intel attributing just 5% of its revenue to AI, we have a long way to go before AI chips become most of what the semiconductor industry produces. Since AI algorithms are capable of constant improvement, they offer a viable substitute for Moore’s Law, which has stalled due to the limitations of physics. As for geopolitics, ‘Murica controls key parts of the semiconductor supply chain, but China hopes to change that.

Since we’re now moving from hardware improvements driven by Moore’s Law to software improvements driven by custom AI chip designs, retail investors may want to look at investing in design software used by the semiconductor industry. In our recent article titled “Invest in Many AI Chips with One Stock,” we look at an $18 billion leading provider of software tools used by every major manufacturer in the semiconductor industry.

NVIDIA is one of the core holdings in our tech stock portfolio along with four other AI stocks. The complete list of disruptive tech stocks and ETFs we’re holding can be found in the “Nanalyze Disruptive Tech Portfolio Report,” now available for all Nanalyze Premium annual subscribers.
