How to Identify Fake News Using AI

Digital media is an extremely tough game these days, and last year saw all kinds of consolidations and layoffs, making us wonder just how good an idea it is to be doing all this research and giving it away for free. Fortunately for us, it’s a game where the competition is growing increasingly weak. We’re not just talking about the herd getting thinned out; we’re talking about the complete and utter tripe that gets passed off as “journalism” these days.

Nothing is more annoying than an “article” that is some variation of “so-and-so said X, and the Internet broke” garbage, which is usually something someone said that probably doesn’t bear repeating, followed by seven pages of tweets pulled right from Twitter, half of which are authored by people who don’t know anything about capitalization, grammar, or spelling. It’s completely embarrassing, but now that seems to be the norm in journalism.

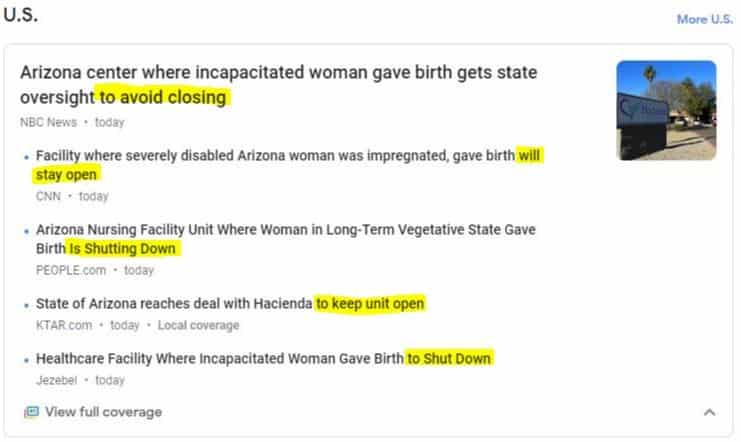

Another trend you see now from large media outlets – aside from lots of typos and grammatical mistakes – is a general laziness when it comes to researching what’s being published. This can partially be explained by the fact that anyone with a blog is now considered a journalist, and the people who recruit them think the title “social media guru” actually means something. Here’s a great example of something we saw just today, which shows how nobody took the time to research a topic prior to vomiting out some half-baked conclusion:

These are major media outlets that all published the same story on the same day – a story which isn’t newsworthy in the first place – and they can’t manage to reach a consensus on something factual they are reporting on. Is this what they call “fake news”? Turns out, that’s another thing that nobody can agree on.

Defining Fake News

Another trendy phrase you’re likely to hear thrown about these days is “fake news,” and we need to be very careful about what we define as “fake news.” It’s similar to how Patreon now has 10% of their staff monitoring their content for “hate speech,” something that now includes “negative generalizations, of people based on their national origin.” In other words, all our “Global AI Race” articles where we take the piss out of nearly every culture out there would be considered “hate speech” by Patreon’s standards.

That’s a very dangerous road to go down, and now we’re starting to see the same thing crop up in “AI bias.” In other words, if we don’t agree with what the AI algorithm tells us, we can just shout “bias” and then change the results. It’s kind of like when some tool disagrees with what you say and calls you a bigot. Ironically, a bigot is defined as “a person who is intolerant toward those holding different opinions.” What we’re getting at here is that we need to be very careful about who gets to decide what “fake news” is.

Every year, the bright minds at CB Insights put together a list of the top-100 AI startups, and this year’s list contains two startups that are said to be using artificial intelligence to address the fake news problem. Let’s take a closer look at each one.

Identifying Fake Images and Video

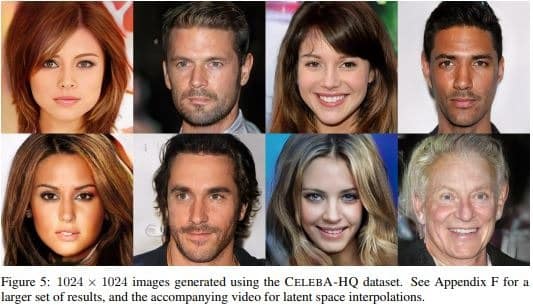

Founded in 2017, San Francisco startup AI Foundation has raised $20.5 million to “move humanity forward through the power of decentralized, trusted, personal AI.” Sounds pretty idealistic and vague, but they’re not just about altruism. One of the tools they are developing will help people identify content that has been generated by artificial intelligence algorithms. For example, many of you have probably seen the very realistic faces that are now being generated by AI algorithms.

AI Foundation has developed a tool called “Reality Defender,” intelligent software built to run alongside digital experiences (such as browsing the web) to detect potentially fake media. Similar to virus protection, the tool scans every image, video, and other piece of media for known fakes, allows you to report suspected fakes, and uses various “AI-driven analysis techniques to detect signs of alteration or artificial generation.”
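To make the virus-scanner comparison concrete, here is a minimal sketch of the “known fakes” part only. It is not how Reality Defender works under the hood (the company doesn’t say); it just assumes a blocklist of cryptographic digests, and every name in it (`KNOWN_FAKE_DIGESTS`, `register_fake`, `is_known_fake`) is made up for illustration.

```python
import hashlib

# Hypothetical blocklist: SHA-256 digests of media files already confirmed as fake.
KNOWN_FAKE_DIGESTS = set()


def media_digest(data: bytes) -> str:
    """Return the SHA-256 hex digest of a media file's raw bytes."""
    return hashlib.sha256(data).hexdigest()


def register_fake(data: bytes) -> None:
    """Record a confirmed or reported fake so later scans can match it."""
    KNOWN_FAKE_DIGESTS.add(media_digest(data))


def is_known_fake(data: bytes) -> bool:
    """Check whether these exact bytes were previously registered as fake."""
    return media_digest(data) in KNOWN_FAKE_DIGESTS
```

The obvious weakness is that an exact-match digest changes the moment someone resizes or re-encodes the file, so a lookup like this only catches byte-for-byte copies. Anything beyond that is where the “AI-driven analysis” would have to earn its keep.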

Given how quickly fake images and video are improving, perhaps a business model that certifies “what’s real” may be more effective. Soon, anyone will be able to churn out millions of realistic-looking fake pictures, and trying to police the world’s media data will be a tough task. Maybe individuals and companies will soon have their own fingerprint that they can apply to the media they produce – whether using AI or not – which shows who the creator was. The company plans to implement this in the form of an “Honest AI watermark,” but should companies really have to disclose that an image has been digitally altered? Adobe uses AI extensively now, so is altering a photo in Adobe Photoshop considered an “AI generated image”? Where do we draw the line?
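For the curious, the creator “fingerprint” idea can be sketched in a few lines. This is our own illustration, not the “Honest AI watermark,” whose details we haven’t seen. It assumes the creator holds a secret key and attaches a keyed hash (HMAC) to each file; the function names `sign_media` and `verify_media` are hypothetical.

```python
import hashlib
import hmac


def sign_media(data: bytes, creator_key: bytes) -> str:
    """Produce a fingerprint tying these exact bytes to the holder of creator_key."""
    return hmac.new(creator_key, data, hashlib.sha256).hexdigest()


def verify_media(data: bytes, creator_key: bytes, tag: str) -> bool:
    """Return True only if the tag matches both the media bytes and the key."""
    expected = sign_media(data, creator_key)
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(expected, tag)
```

A scheme like this only works for parties who share the secret key. Letting the general public verify who made something would call for public-key signatures instead, and note that any edit to the file, AI-assisted or not, invalidates the fingerprint. That brings us right back to the question of where to draw the line.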

Protecting Brands from Fake News

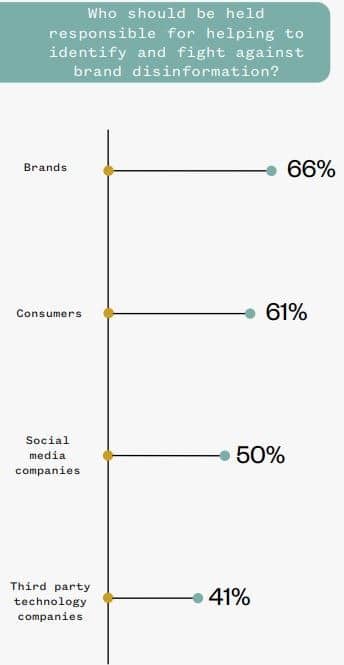

Founded in 2015, New Knowledge is a startup from Austin, Texas that describes itself as “a cybersecurity company specializing in disinformation defense for highly visible brands.” The company has taken in $13 million in funding so far to use machine learning to “detect threats and provide brand manipulation protection before damage is done.” In particular, they work to “detect, monitor and mitigate social media manipulation.” To provide some foundation for their value proposition, they put together a study in which they asked 1,500 people a bunch of questions over three days and determined that a brand’s reputation is pretty important. Nobody disagrees with that. The company says that “hackers” often make coordinated efforts to damage a brand’s reputation, and that brands should therefore protect themselves.

Consequently, New Knowledge is positioning themselves as a cybersecurity firm that brands will employ to protect their reputations.

Again, we need to be very careful how this sort of technology is being applied. Take, for example, the recent debacle where Procter & Gamble (PG), a company we count on for growing dividend streams, decided it was a good idea to dive headfirst into politics. The company’s PR firm literally spent the first 48 hours after their “short film” was released deleting negative YouTube comments as fast as their little fingers could type. Were they “combating disinformation,” or suppressing the opinions of angry customers who were pissed off about something some experts are calling “the year’s worst marketing move”?

Conclusion

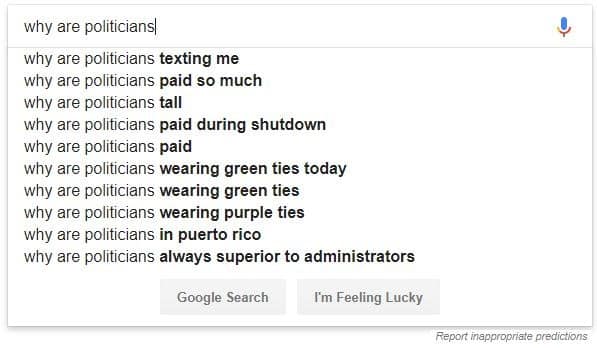

It’s kind of interesting to go to the Google search bar and type in a phrase and then see what it auto-suggests based on popular searches. What this also indicates is what other people think of a particular topic, based on what they search for. Here’s one example using the word “politicians.”

It used to be that you could type in people from various countries and see what common stereotypes exist. For example, you could type “Why are Swiss people ____” and Google would then populate the words that people would search for about Swiss people, and no doubt the first word was “boring.” Google has now removed the auto-suggest function for the majority of searches relating to any culture or country because – presumably – they’re scared someone will complain. (We did find one they missed though, “why are brits____.”) How long will it be until Google starts to decide what is “fake news” and removes it from their search function? Google won’t do business in China because of censorship, so they need to apply that same set of principles internally. So do any of these startups that are now policing “fake news.”
